In this article, we’ll go into the details of CMS deployment in a failover cluster.

Theory

Basically, there are 3 types of CMS deployment:

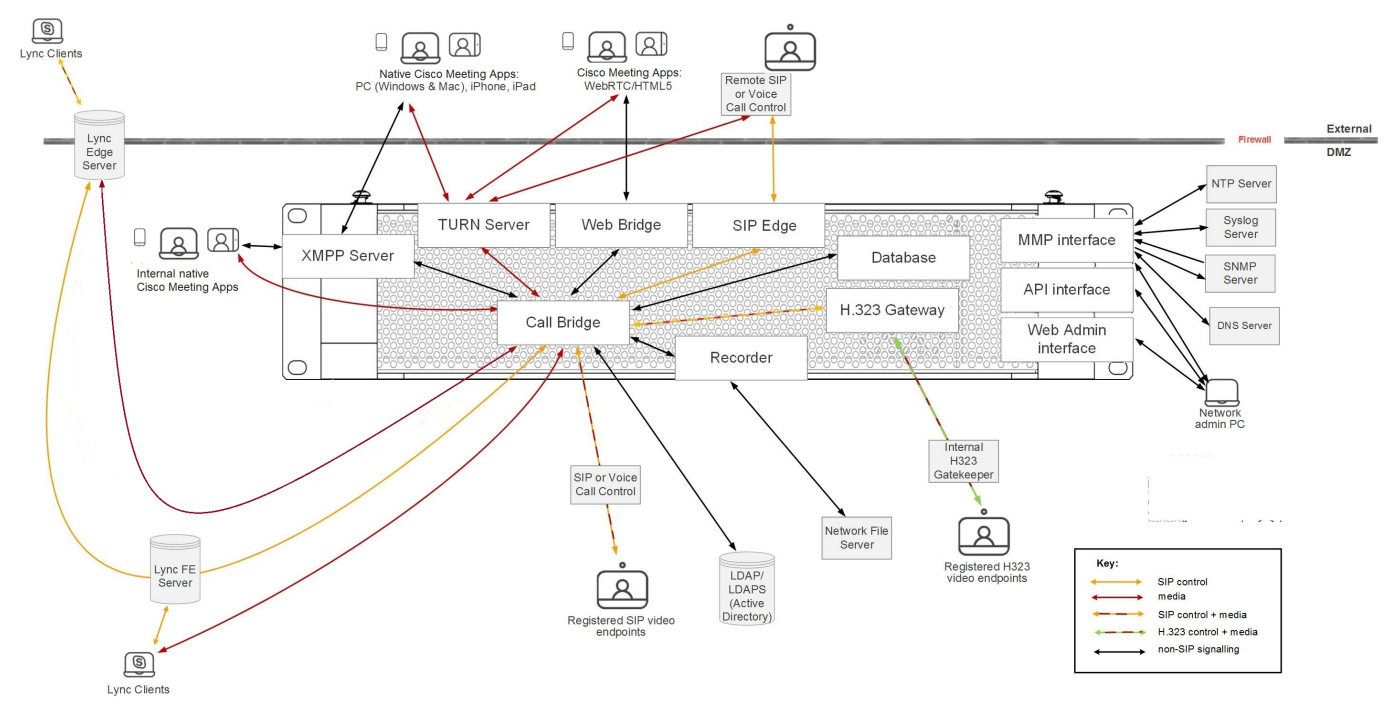

- Single Combined: all services run on a single server. In most cases, this deployment type suits only systems with internal clients, small environments where scalability and redundancy are not critical, or cases where CMS performs only certain functions, such as CUCM special conferences.

Approximate working scheme:

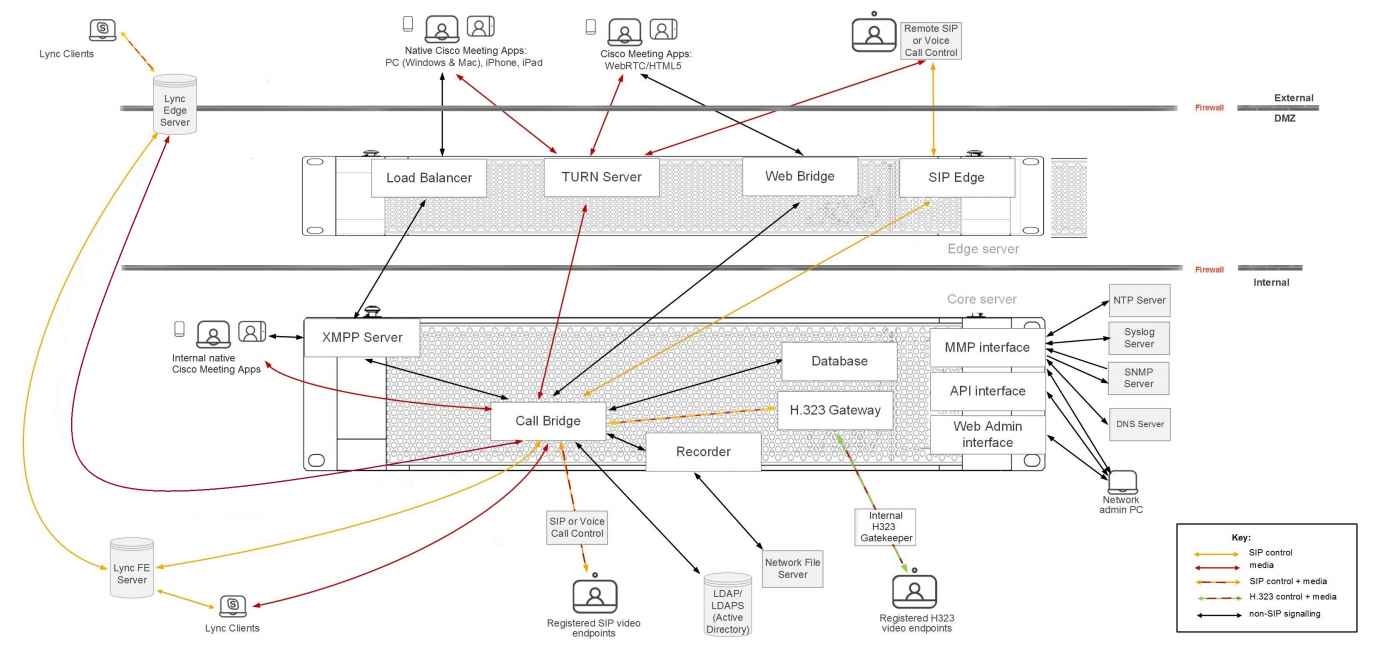

- Single Split extends the previous deployment type with a separate server for external access. In older deployments, this meant placing one CMS server in the DMZ (demilitarized zone), where external clients could reach it, and another CMS server in the core layer for local clients. This model is being replaced by the so-called Single Edge type, in which Cisco Expressway servers provide (or will provide) most firewall traversal techniques, so a separate CMS edge server is no longer needed.

Approximate working scheme:

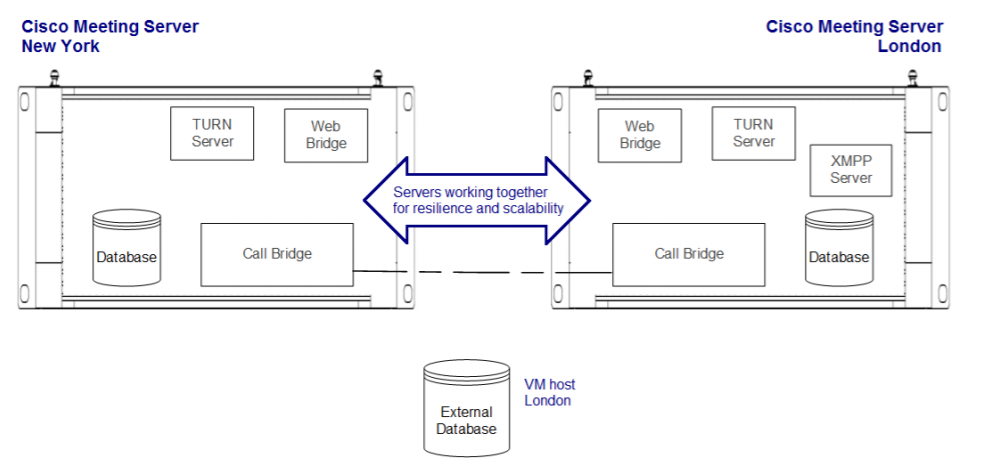

- Scalable and Resilient: this type adds redundancy for each component, so the system can grow with your needs up to its maximum capacity while staying available if a component fails. It also uses the Single Edge concept to provide secure external access. This is the type we are going to discuss here. Once we know how to deploy this type of cluster, we will not only understand the other deployment types, but also be able to build CMS server clusters that account for future growth in requirements.

Before we discuss the deployment, we should clarify some basic ideas.

Basic CMS Program Components

- Database: aggregates certain configuration data, such as subscriber groups, user spaces and users. Supports clustering for high availability only (a single master).

- Call Bridge: audio and video conferencing service that implements full control and processing of calls and multimedia processes. Supports high availability and scalable clustering.

- XMPP server: controls registration and authentication for the clients that use Cisco Meeting Application and/or WebRTC (real-time communication, used in browser), and intercomponent signaling. Supports high availability clustering only.

- Web Bridge: provides WebRTC access for the clients.

- Load balancer: provides a unified connection point for Cisco Meeting Applications in Single Split mode. It listens on an external interface and port for incoming connections. The load balancer also accepts incoming TLS connections from an XMPP server and transfers incoming TCP connections from external clients. It won’t be used in our scenario.

- TURN server: provides firewall traversal, which allows CMS to sit behind a firewall or NAT while external clients connect with Cisco Meeting App or SIP devices. It won’t be used in our scenario.

- Web Admin: administration interface and API access (for Unified CM special conferences as well).

Configuration Approaches

Unlike most Cisco products, Cisco Meeting Server supports 3 configuration approaches, allowing you to perform any deployment type:

- Command line interface (CLI), also known as MMP, can be used for initial configuration and certificate management.

- Web admin: mostly for Call Bridge-related configuration tasks, especially for the configuration of a single non-clustered server.

- REST API: for the most complicated configuration tasks and for clustered database-related tasks.

Besides that, you can use SFTP to transfer files, including licenses, certificates and logs, to and from your CMS server.

Practice

Cisco deployment guides state explicitly that a database cluster must consist of at least 3 nodes, because the new database master election mechanism works only with an odd number of nodes:

Note: It's recommended to have an odd number of DB cluster nodes as it is important for the master selection and the active failover mechanism. Another reason for this is that the master DB node would be the node that has connections to the most of the DB in the cluster. You can have a maximum of 5 nodes in a DB cluster.

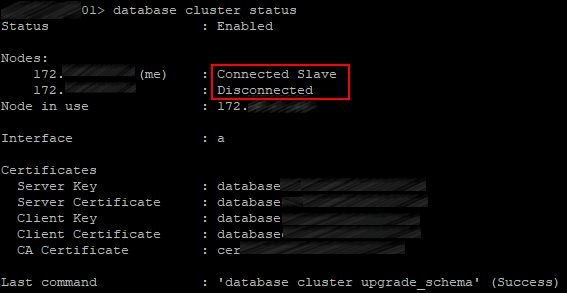

Indeed, practice shows that 2 cluster nodes are not enough. With two nodes, master selection is triggered only when the Master is rebooted, and the Slave becomes Master only after the rebooted server comes back up. If the Master simply goes down in a two-server cluster, the Slave won’t become Master. And if the Slave goes down, the remaining Master will become a Slave, too.

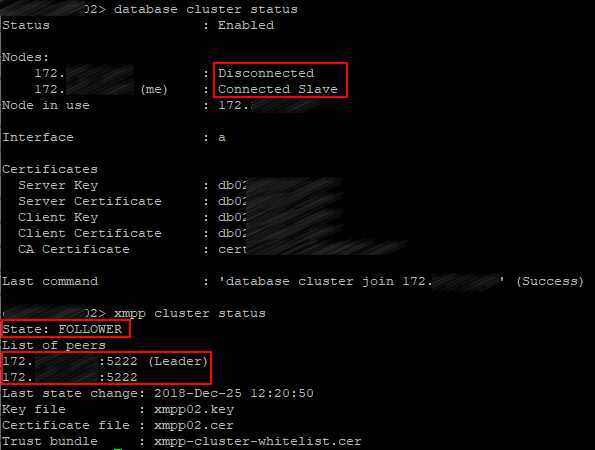
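The behavior above matches what a majority-based election would produce. The following is a minimal sketch, assuming the master must be able to reach a strict majority of the configured database nodes (the guide only says the master is the node connected to most of the nodes, so the exact rule is an assumption, and the function names are illustrative):

```python
def majority(cluster_size: int) -> int:
    """Smallest number of nodes that forms a strict majority of the cluster."""
    return cluster_size // 2 + 1


def can_elect_master(cluster_size: int, reachable_nodes: int) -> bool:
    """True if the reachable nodes (including the candidate) form a strict majority."""
    return reachable_nodes >= majority(cluster_size)


def tolerated_failures(cluster_size: int) -> int:
    """Number of nodes that may fail while a majority still remains."""
    return cluster_size - majority(cluster_size)


if __name__ == "__main__":
    for size in (2, 3, 4, 5):
        print(
            f"{size} nodes: majority={majority(size)}, "
            f"tolerated failures={tolerated_failures(size)}"
        )
```

Under this assumption, a two-node cluster tolerates no failures at all, and a fourth node adds nothing over three, which is one way to read the recommendation of an odd node count.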

As for XMPP, a cluster should indeed consist of 3 servers. With only two, if you stop the XMPP service on the server that holds Leader status, XMPP on the remaining server stays in Follower status and the Call Bridges disconnect, because a Call Bridge can connect only to an XMPP server in Leader status. This is critical, because no calls will go through.

These deployment guides also show a cluster with a single XMPP server.

In view of the aforementioned, it’s clear: it works in failover mode.

In our case, XMPP server will be running on all three nodes.

Suppose that all three servers are up.

DNS Records

Before we proceed to the servers’ configuration, the following A and SRV DNS records must be created:

| Record type | Record | Description |
| --- | --- | --- |
| A | servername-01.example.com, servername-02.example.com, servername-03.example.com | Resolves the name of each server in our cluster to its IP address. |
| A | join.example.com, join.example.com, join.example.com | Three identical records, each resolving to the IP address of one of the servers. Users enter this DNS name in a browser to join a conference from the web. |
| SRV | _xmpp-client._tcp.conf.example.com, _xmpp-client._tcp.conf.example.com, _xmpp-client._tcp.conf.example.com | Three identical records pointing to the A records servername-0x.example.com on port 5222. Cisco Meeting App clients use these records to find the XMPP server. |
| SRV | _xmpp-component._tcp.conf.example.com, _xmpp-component._tcp.conf.example.com, _xmpp-component._tcp.conf.example.com | Three identical records pointing to the A records servername-0x.example.com on port 5223. Call Bridges use these records to find the XMPP server if it is not defined explicitly. |

Note that our DNS records use 2 domains: example.com and conf.example.com. Example.com is the domain used in the URIs of all Cisco Unified Communications Manager subscribers (which most likely is or will be used in your infrastructure). It may also be the domain used for email addresses. For example, the Jabber client on your laptop can have the URI user@example.com.

Conf.example.com is the domain configured for all Cisco Meeting Server users. Jabber users should use the URI user@conf.example.com to log in to Cisco Meeting Server.
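To make the record set concrete, here is a small sketch that prints the twelve records in a BIND-like zone notation. The IP addresses are placeholders from the documentation range, and the SRV priority and weight of 10 are assumptions, since the guide does not specify them:

```python
# Placeholder addresses (RFC 5737 documentation range); replace with your own.
SERVERS = {
    "servername-01.example.com": "192.0.2.11",
    "servername-02.example.com": "192.0.2.12",
    "servername-03.example.com": "192.0.2.13",
}


def a_records(name, addresses):
    """One A record per address for the given name."""
    return [f"{name}. IN A {address}" for address in addresses]


def srv_records(service, domain, port, targets, priority=10, weight=10):
    """One SRV record per target host; priority and weight are assumed values."""
    return [
        f"{service}._tcp.{domain}. IN SRV {priority} {weight} {port} {target}."
        for target in targets
    ]


def build_zone(servers):
    """Assemble the A and SRV records described in the table above."""
    hosts = list(servers)
    lines = []
    for host in hosts:
        lines += a_records(host, [servers[host]])
    lines += a_records("join.example.com", [servers[host] for host in hosts])
    lines += srv_records("_xmpp-client", "conf.example.com", 5222, hosts)
    lines += srv_records("_xmpp-component", "conf.example.com", 5223, hosts)
    return lines


if __name__ == "__main__":
    print("\n".join(build_zone(SERVERS)))
```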

Basic Configuration

The configuration process will be described for a single server, but it should be performed for each server in a cluster.

QoS

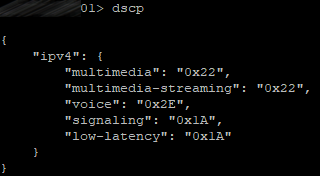

Since CMS produces real-time traffic that is sensitive to delays and packet loss, in most cases it’s recommended to set up quality of service (QoS). CMS supports marking packets with DSCP (differentiated services code points). DSCP-based traffic prioritizing depends on the way the traffic is processed by the network components in your infrastructure. We are going to configure our CMS with typical DSCP prioritizing that is based on best QoS practices.

Enter the following commands on each server:

dscp 4 multimedia 0x22

dscp 4 multimedia-streaming 0x22

dscp 4 voice 0x2E

dscp 4 signaling 0x1A

dscp 4 low-latency 0x1A

So, all video traffic will be marked with AF41 (DSCP 0x22), all voice traffic will be marked with EF (DSCP 0x2E), other low-latency traffic types, such as SIP and XMPP, will be marked with AF31 (DSCP 0x1A).
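The hexadecimal values follow from the standard DSCP arithmetic: an Assured Forwarding class AFxy has the value 8x + 2y, and EF is 46. A short sketch that derives the values and rebuilds the commands above (the helper names are illustrative, not part of CMS):

```python
def af_class(cls: int, drop: int) -> int:
    """DSCP value of Assured Forwarding class AF<cls><drop> (RFC 2597)."""
    return 8 * cls + 2 * drop


EF = 46  # Expedited Forwarding (RFC 3246)


def tos_byte(dscp: int) -> int:
    """DSCP occupies the upper six bits of the IP TOS / Traffic Class byte."""
    return dscp << 2


# Traffic types and classes as used in the commands above.
MARKINGS = {
    "multimedia": af_class(4, 1),
    "multimedia-streaming": af_class(4, 1),
    "voice": EF,
    "signaling": af_class(3, 1),
    "low-latency": af_class(3, 1),
}


def dscp_commands(markings, ip_version=4):
    """Format one 'dscp' command line per traffic type."""
    return [
        f"dscp {ip_version} {traffic} 0x{value:02X}"
        for traffic, value in markings.items()
    ]


if __name__ == "__main__":
    for line in dscp_commands(MARKINGS):
        print(line)
```

The `tos_byte` helper is handy when checking a packet capture, where the whole TOS byte is often displayed rather than the six-bit DSCP value.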

Let’s check:

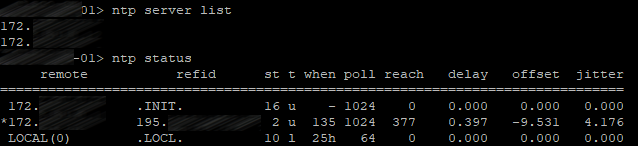

NTP

Network Time Protocol (NTP) is important not only for providing precise timestamps for calls, but also for certificate verification.

Use the following command to add your NTP servers:

ntp server add <server>

In our case, there are two of them, so the command will be executed twice.

Let’s check:

Set the time zone for your server.

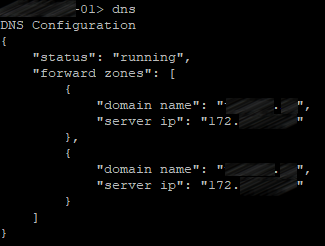

DNS

Use the following command on CMS to add DNS servers:

dns add forwardzone <domain-name> <server ip>

In our case, there are two of them, so the command will be executed twice.

Let’s check:

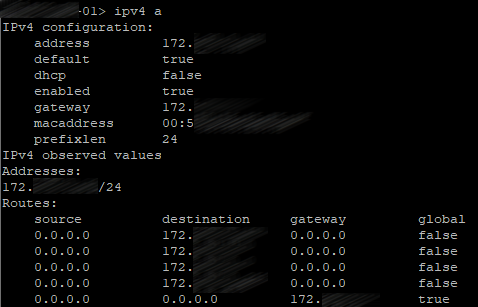

Network Interface Configuration

Use the following command to create a network interface:

ipv4 <interface> add <address>/<prefix length> <gateway>
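Before typing the command on each node, it can help to sanity-check the values. The sketch below uses Python's standard `ipaddress` module to confirm that the gateway lies in the interface's subnet and then formats the command line. The interface name `a` and the addresses are assumptions used for illustration:

```python
import ipaddress


def ipv4_add_command(interface: str, address_with_prefix: str, gateway: str) -> str:
    """Validate the address/gateway pair and format the 'ipv4 ... add' line."""
    iface = ipaddress.ip_interface(address_with_prefix)
    gw = ipaddress.ip_address(gateway)
    if iface.version != 4 or gw.version != 4:
        raise ValueError("both the address and the gateway must be IPv4")
    if gw == iface.ip:
        raise ValueError("gateway must differ from the interface address")
    if gw not in iface.network:
        raise ValueError(f"gateway {gw} is outside {iface.network}")
    return f"ipv4 {interface} add {iface.with_prefixlen} {gw}"


if __name__ == "__main__":
    # Placeholder values from the RFC 5737 documentation range.
    print(ipv4_add_command("a", "192.0.2.11/24", "192.0.2.1"))
```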

Let’s check:

Hostname

Use the following command to set the hostname:

hostname <name>

Then reboot the system.

The basic configuration is over.

Certificates

Theoretics

Cisco Meeting Server uses an encrypted connection between different components, therefore X.509 certificates are required for all CMS deployments, so servers and services know if they can trust each other.

Each service needs a certificate, but creating a separate certificate for each service may lead to confusion and excessive complexity. Fortunately, we can generate a public and private certificate key pair and then use it for several services. In our case, one and the same certificate will be used for Call Bridge, XMPP server, Web Bridge and Web Admin. So, we only have to create a public/private key pair for each server in a cluster.

Database clustering, however, has special certificate requirements, so it needs its own certificates, separate from those of the other services. CMS uses a server certificate similar to the other servers’ certificates, but there is also a client certificate used for database connections. Database certificates are used for both authentication and encryption. Instead of supplying a username and password to connect to the database, a client presents a client certificate that the server trusts. Every server in a database cluster uses the same public/private key pair, so any server in the cluster can encrypt data that any other server holding the same key pair can decrypt.

For redundancy to work properly, a database cluster should consist of at least three and at most five servers, with a maximum round-trip time of 200 ms between any two nodes. This limit is stricter than the Call Bridge limits, so it usually becomes the limiting factor in geographically distributed deployments.

The CMS database role has several specific requirements. Unlike other roles, it requires both client and server certificates, and the client certificate must have a specific CN field that it presents to the server.

CMS uses a postgres database with one main server and several identical replicas. At any given moment there is only one main database (the “database server”). The other nodes are replicas, or “database clients”.

A database cluster requires a dedicated server certificate and a client certificate. They must be signed, usually by an internal private CA. Since any node in a database cluster can become the main one, the certificates for database server and client (public/private key pairs) must be copied on each server, so it can become a database client or server. Besides that, the root CA certificate must be uploaded, so the client and server certificates can be verified.

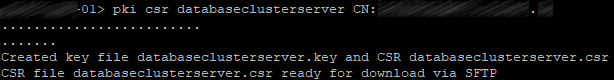

Certificate Requests

So, use the following command to create a certificate request for all server services except database (it will require a separate request):

pki csr hostname CN:cms.example.com subjectAltName:hostname.example.com,example.com,conf.example.com,join.example.com

CN should contain the common name of our servers. For example, if the hostnames are: server01, server02, server03, the CN should be server.example.com.

Do the same on the other two servers, using the corresponding hostnames in the commands.
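Since the request differs only in the hostname, the three command lines can be generated rather than typed by hand. This is a minimal sketch, assuming the hostnames server01 to server03 and the common name server.example.com from the example above; the function name is hypothetical:

```python
def service_csr_command(hostname, common_name, domain, extra_sans):
    """Build the 'pki csr' line for the non-database services of one server."""
    # The server's own FQDN goes first, followed by the shared names.
    sans = [f"{hostname}.{domain}", *extra_sans]
    return f"pki csr {hostname} CN:{common_name} subjectAltName:{','.join(sans)}"


if __name__ == "__main__":
    shared_sans = ["example.com", "conf.example.com", "join.example.com"]
    for host in ("server01", "server02", "server03"):
        print(service_csr_command(host, "server.example.com", "example.com", shared_sans))
```

Each printed line is meant to be run on the server it names, because the private key is generated on the server where the request is created.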

Create two requests for the certificates that will be used by the database service:

pki csr dbclusterserver CN:hostname1.example.com subjectAltName:hostname2.example.com,hostname3.example.com

pki csr dbclusterclient CN:postgres

where dbclusterserver and dbclusterclient are the names of our requests and future certificates, and hostname1, hostname2 and hostname3 are the corresponding server names.

Perform this procedure on one server only, and upload the certificates and corresponding .key files to the other servers.

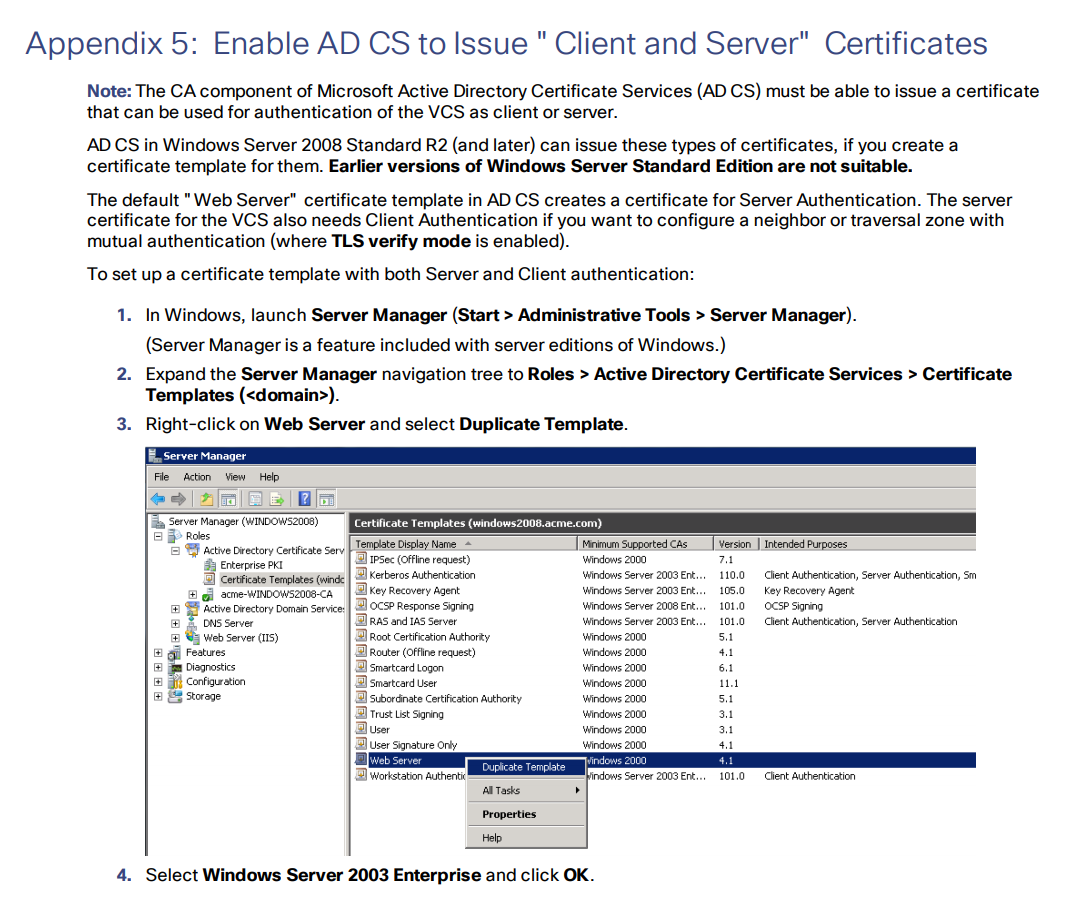

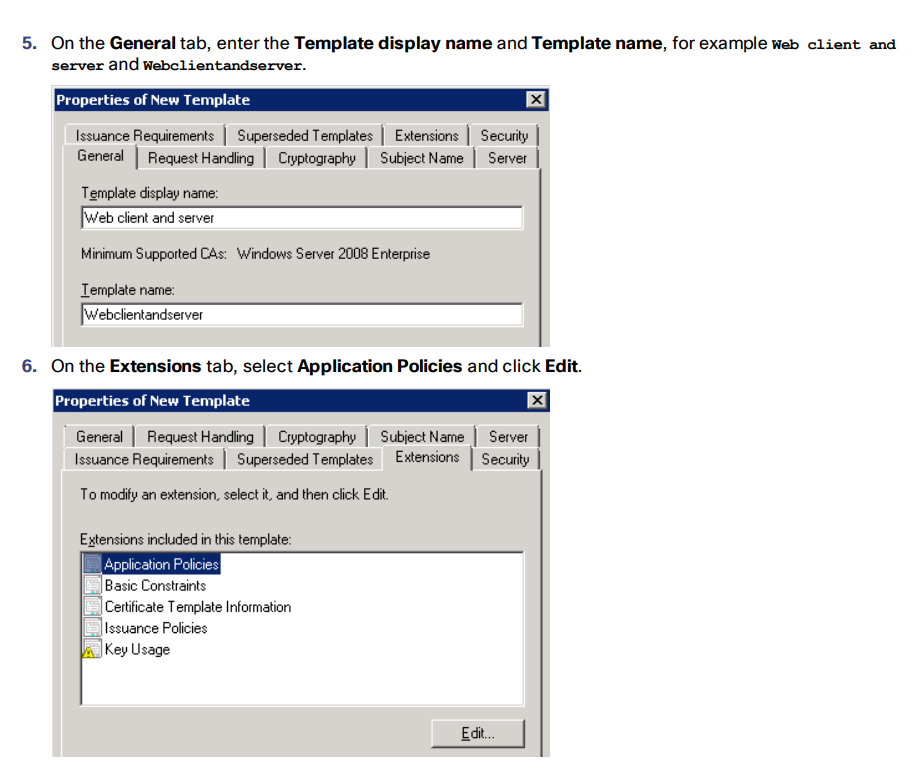

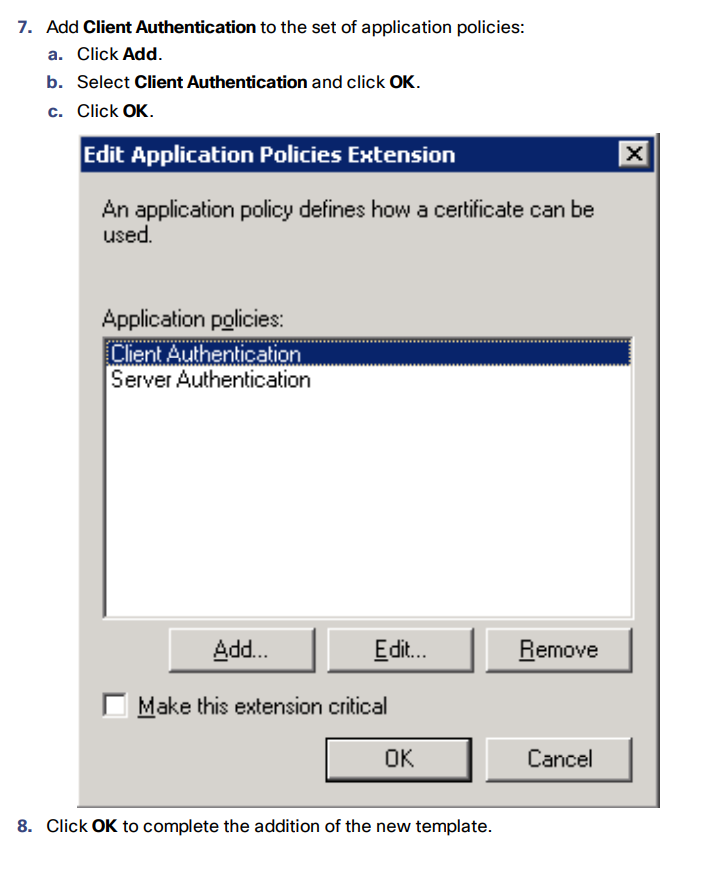

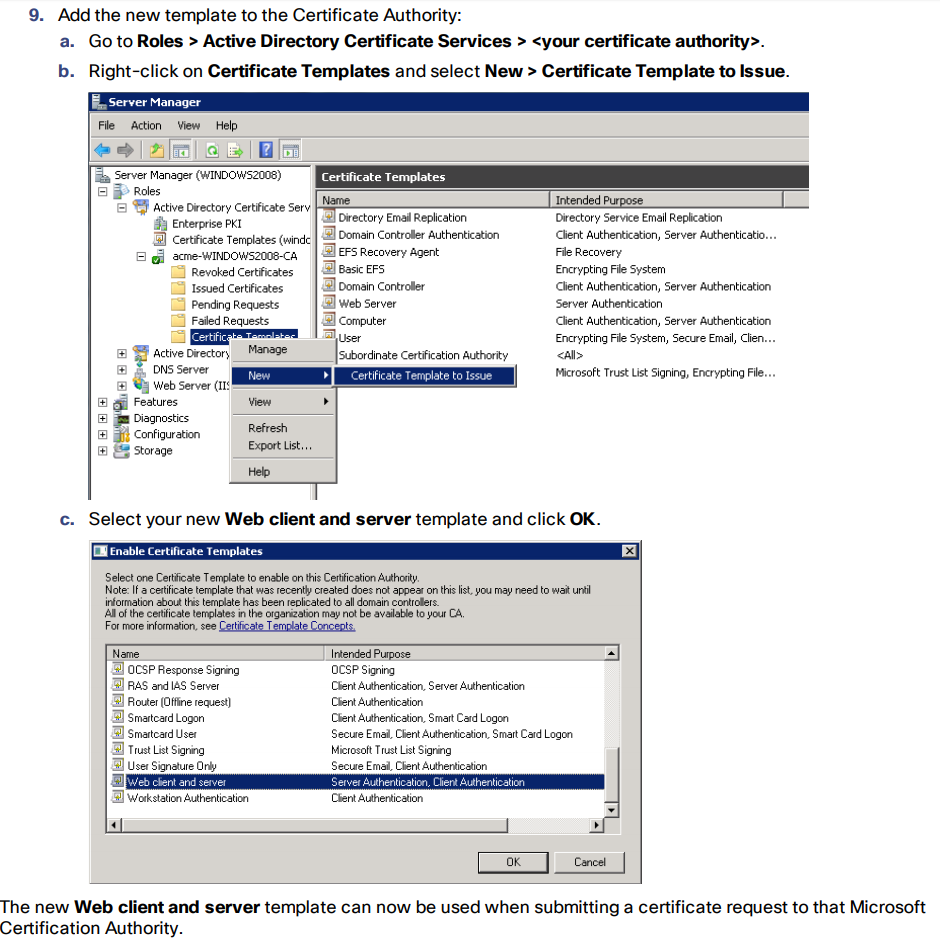

Enable AD CS to Issue “Client and Server” Certificates

Merge Certificates

Merge all servers’ certificates into one file:

In *NIX:

cat server01.cer server02.cer server03.cer > server.cer

In Windows/DOS:

copy server01.cer + server02.cer + server03.cer server.cer
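If you prefer a platform-independent way to do the same thing, the merge can be sketched in Python. This assumes the .cer files are PEM (Base64) encoded, which the guide does not state. The check for the BEGIN marker is an added safeguard, not something the commands above perform:

```python
from pathlib import Path

PEM_HEADER = "-----BEGIN CERTIFICATE-----"


def merge_certificates(sources, destination):
    """Concatenate PEM certificates into one bundle; return how many were merged."""
    chunks = []
    for source in sources:
        text = Path(source).read_text(encoding="ascii")
        if PEM_HEADER not in text:
            raise ValueError(f"{source} does not look like a PEM certificate")
        # Normalize trailing whitespace so each certificate ends with one newline.
        chunks.append(text.strip() + "\n")
    Path(destination).write_text("".join(chunks), encoding="ascii")
    return len(chunks)


if __name__ == "__main__":
    names = ["server01.cer", "server02.cer", "server03.cer"]
    if all(Path(name).exists() for name in names):
        count = merge_certificates(names, "server.cer")
        print(f"merged {count} certificates into server.cer")
    else:
        print("certificate files not found in the current directory")
```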

And upload the following files to each server:

- The server's certificate.

- Root certificate (with intermediate ones if they exist).

- Database certificates (“server” and “client” ones) and .key files that have been created upon the request for “server” and “client” database certificates. These are the same files for all servers.

- All servers’ certificates.

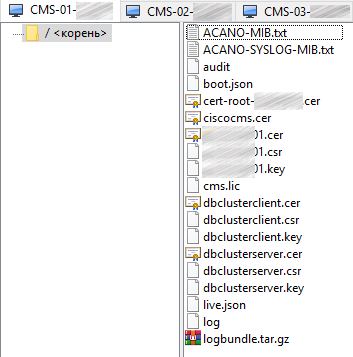

You should get a similar set of files on each server:

In the next part of this article we’ll continue discussing the details of CMS clustered deployment.

Read also:

- Part 1 - Cisco Meeting Server Cluster: Scalability and Resilience deployment with meeting recording

- Part 2 - Cisco Meeting Server Cluster: Scalability and Resilience deployment with meeting recording

- Part 3 - Cisco Meeting Server Cluster: Scalability and Resilience deployment with meeting recording

This article is a translation of a guide originally created by S. Dubinin, Telecommunications Specialist - https://habr.com/ru/post/434240/